[from Science & Technology Review July/August 2025 Research Highlights, by Noah Pflueger-Peters]

Achieving ignition at the National Ignition Facility (NIF) proved that harnessing the power of the Sun in a laboratory may be possible. The Sun’s extreme temperatures and pressures cause light elements to fuse together to create heavier ones, releasing enormous energy and sustaining conditions for more thermonuclear reactions. NIF replicates these conditions with inertial confinement fusion, in which lasers compress and heat a target capsule filled with deuterium and tritium (DT), “heavy” isotopes of hydrogen that contain extra neutrons. When the isotopes fuse, they create helium and a neutron, and the mass lost in the reaction is released as energy. Capturing that energy to generate electricity is the goal of inertial fusion energy (IFE).
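As a worked check on how lost mass becomes energy, the short Python sketch below applies E = mc² to standard reference atomic masses of the reactants and products. The function name is illustrative only. It recovers the familiar figure of about 17.6 MeV per D-T reaction and shows that the neutron carries roughly four-fifths of it.

```python
# Energy released by D + T -> He-4 + n, computed from the mass defect (E = mc^2).
# Atomic masses in unified atomic mass units (u), standard reference values.
# Atomic (not nuclear) masses work here because the electrons balance:
# D and T carry one electron each, and a neutral He-4 atom carries two.
M_DEUTERIUM = 2.01410177812
M_TRITIUM = 3.01604928
M_HELIUM4 = 4.00260325413
M_NEUTRON = 1.00866491595
MEV_PER_U = 931.49410242  # energy equivalent of 1 u, in MeV


def dt_q_value_mev() -> float:
    """Return the energy released per D-T fusion reaction, in MeV."""
    mass_before = M_DEUTERIUM + M_TRITIUM
    mass_after = M_HELIUM4 + M_NEUTRON
    return (mass_before - mass_after) * MEV_PER_U


q = dt_q_value_mev()
# Momentum conservation splits the energy in inverse proportion to mass,
# so the light neutron carries about 4/5 and the helium nucleus about 1/5.
neutron_share = M_HELIUM4 / (M_HELIUM4 + M_NEUTRON)
print(f"Q = {q:.2f} MeV; neutron carries about {q * neutron_share:.2f} MeV")
```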

Nuclear fusion produces significantly more energy than either nuclear fission or burning fossil fuels for equivalent amounts of fuel. Since the input materials for fusion energy are plentiful on Earth, an IFE power plant could produce safe, abundant, power grid-compatible energy without highly radioactive byproducts.
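A rough back-of-the-envelope comparison makes the energy-density claim concrete. The sketch below divides each reaction's energy release by the mass of fuel it consumes. The roughly 200 MeV per U-235 fission is an approximate textbook figure, and the coal heating value of 24 MJ/kg is an assumed typical number (real coals vary widely). The fission figure counts only the U-235 actually split, not a reactor's full fuel load.

```python
# Approximate energy released per kilogram of fuel consumed.
# Fusion and fission values come from per-reaction energy releases;
# the coal value is an ASSUMED typical heating value, not measured data.
MEV_TO_J = 1.602176634e-13
U_TO_KG = 1.66053906660e-27


def energy_per_kg(q_mev: float, fuel_mass_u: float) -> float:
    """Energy (J) per kg of fuel, from energy per reaction and fuel mass per reaction."""
    return (q_mev * MEV_TO_J) / (fuel_mass_u * U_TO_KG)


dt_fusion = energy_per_kg(17.6, 2.014 + 3.016)  # one D plus one T nucleus per reaction
u235_fission = energy_per_kg(200.0, 235.04)     # ~200 MeV per U-235 fission (approximate)
coal = 2.4e7                                    # ~24 MJ/kg, assumed typical coal

for name, value in [("D-T fusion", dt_fusion), ("U-235 fission", u235_fission), ("coal", coal)]:
    print(f"{name:14s} {value:.2e} J/kg  ({value / coal:.1e} x coal)")
```

With these inputs, D-T fusion comes out about four times fission per kilogram and more than ten million times coal.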

Although significant work remains to harness fusion energy, pursuing the development and deployment of IFE is crucial for the nation’s energy security, enabling the United States to shape implementation worldwide, avoid technological surprises from adversaries, and influence technical leadership in other energy-intensive technologies such as AI, machine learning (ML), and supercomputing.

IFE research stretches back to the early days of Lawrence Livermore, and today the Laboratory is fostering the overall fusion ecosystem. Livermore’s unique capabilities, expertise, and connections will be critical to laying the technical, logistical, and legal groundwork to make IFE possible. “IFE is a grand scientific and engineering challenge, something that is so incredibly difficult and high-risk and takes enormous expertise,” says Tammy Ma, Livermore’s IFE Institutional Initiative lead. “This challenge makes it the right kind of problem for national laboratories to pursue.”

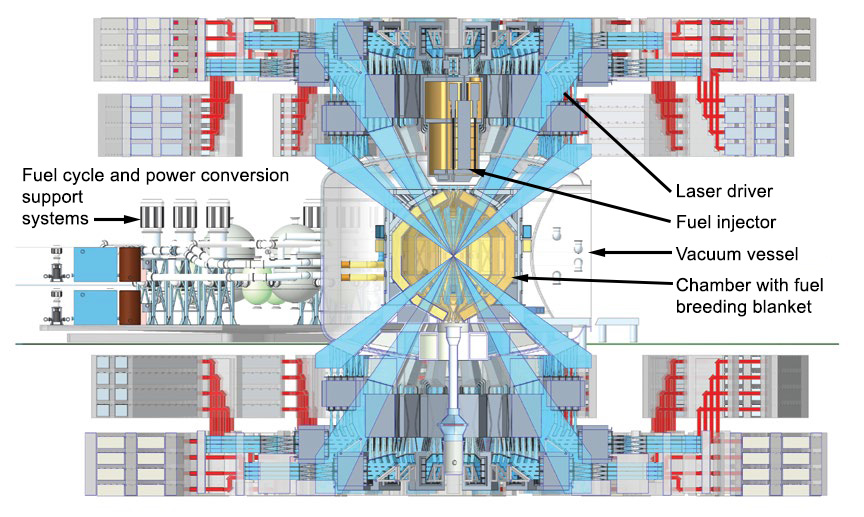

This artist’s rendering shows the concept for an inertial fusion energy (IFE) power plant design, with a cutaway to show the plant’s target chamber in the center. Livermore researchers are laying the groundwork for private fusion companies to build similar designs. (Illustration by Eric Smith.)

Designing for Viability

NIF is the only facility to date to demonstrate the ignition and burning plasma conditions that are prerequisites for IFE, but it is an experimental facility for stockpile stewardship research, not a power plant. To be commercially viable, producing enough energy to offset costs and meet continuous demand (baseload power), IFE plants will need to generate more than 30 times the energy they deliver to the fusion target on every shot while firing 10 or more shots per second, compared to NIF’s rate of one or two shots per day.
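Why the gain threshold matters can be seen from a simplified steady-state power balance. In the sketch below, fusion power is laser energy times target gain times repetition rate, and part of the resulting electricity must be recirculated to run the laser, so the recirculating fraction works out to 1/(gain × laser efficiency × thermal efficiency). All inputs are hypothetical assumptions chosen for illustration (a 2.2 MJ driver, 15% laser wall-plug efficiency, 45% thermal-to-electric conversion), and the model ignores blanket energy multiplication and other plant loads.

```python
from dataclasses import dataclass


@dataclass
class PowerBalance:
    fusion_power_w: float
    gross_electric_w: float
    laser_electric_w: float
    net_electric_w: float
    recirculating_fraction: float


def power_balance(laser_energy_j: float, target_gain: float, rep_rate_hz: float,
                  laser_wall_plug_eff: float, thermal_to_electric_eff: float) -> PowerBalance:
    """Simplified electric power balance for a repetitively fired IFE plant.

    Ignores blanket energy multiplication and non-laser plant loads.
    """
    fusion_power = laser_energy_j * target_gain * rep_rate_hz
    gross_electric = fusion_power * thermal_to_electric_eff
    # Electricity the laser draws: delivered laser power divided by wall-plug efficiency.
    laser_electric = laser_energy_j * rep_rate_hz / laser_wall_plug_eff
    net = gross_electric - laser_electric
    return PowerBalance(fusion_power, gross_electric, laser_electric, net,
                        laser_electric / gross_electric)


# Hypothetical inputs: 2.2 MJ per shot, 10 shots/s, 15% wall-plug, 45% thermal efficiency.
for gain in (4.13, 30, 50, 100):
    pb = power_balance(2.2e6, gain, 10, 0.15, 0.45)
    print(f"gain {gain:6.2f}: net {pb.net_electric_w / 1e6:8.1f} MWe, "
          f"recirculating {pb.recirculating_fraction:.0%}")
```

Under these assumed efficiencies, a NIF-like gain of 4.13 consumes far more electricity than it produces, a gain of 30 returns about 150 MWe net while recirculating roughly half the gross output, and gains of 50 to 100 cut that fraction to about 30% and 15%.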

The Laser Inertial Fusion Energy (LIFE) study, conducted between 2008 and 2013, aimed to build directly on technology developed for NIF and addressed these requirements systematically by developing the Integrated Process Model (IPM). (See S&TR, April/May 2009, pp. 6-15.)

IPM is a technoeconomic model of an IFE power plant with detailed technical and cost breakdowns and interdependencies of key systems and subsystems. “The work done under LIFE was fantastic,” says Ma. “IPM lays out engineering and physics requirements for the entire system to test out different scenarios and see the impact. Now, we not only get to expand on all that but also leverage 15 years of new data from NIF, better codes, and high-performance computing (HPC), as well as new work in AI, ML, advanced manufacturing, diagnostics, and nonproliferation across the Laboratory.”

IPM describes an IFE power plant built around a solid-state laser driver, which uses laser diodes rather than the flashlamps used at NIF to “pump” the lasers with optical energy. The plant will also need to fabricate and fill target capsules onsite and send them into its target chamber at a high enough frequency to produce baseload power. “We will have to repeatedly inject targets into the chamber, so the targets must be able to withstand and survive that process,” explains Ma. “Then, the lasers will track the moving targets, and when one gets to the center of the chamber, they would fire on the centered target, repeating 10 to 20 times per second.”
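The tracking step Ma describes amounts to predicting when a moving target will reach the firing point. A minimal one-dimensional sketch, assuming the target moves at constant velocity (ignoring gravity, chamber-gas drag, and transverse motion), fits position measurements by least squares and computes when to trigger the laser. The sensor readings, 100 m/s speed, 6 m injector-to-center distance, 1 µs trigger latency, and function names are all hypothetical.

```python
def fit_constant_velocity(times_s, positions_m):
    """Ordinary least-squares fit of x(t) = x0 + v * t; returns (x0, v)."""
    n = len(times_s)
    t_mean = sum(times_s) / n
    x_mean = sum(positions_m) / n
    s_tt = sum((t - t_mean) ** 2 for t in times_s)
    s_tx = sum((t - t_mean) * (x - x_mean) for t, x in zip(times_s, positions_m))
    v = s_tx / s_tt
    return x_mean - v * t_mean, v


def laser_trigger_time(times_s, positions_m, center_m, trigger_latency_s):
    """When to trigger so the pulse arrives as the target reaches the chamber center."""
    x0, v = fit_constant_velocity(times_s, positions_m)
    t_center = (center_m - x0) / v
    return t_center - trigger_latency_s


# Hypothetical: a target flies ~100 m/s toward a center 6 m from the injector,
# and three upstream sensors report slightly noisy positions (meters).
times = [0.010, 0.020, 0.030]
positions = [1.0004, 1.9998, 3.0001]
t_fire = laser_trigger_time(times, positions, center_m=6.0, trigger_latency_s=1e-6)
print(f"trigger laser at t = {t_fire * 1e3:.3f} ms")
```

With these assumed numbers the flight takes about 60 ms, so at 10 to 20 shots per second up to two targets can be in flight at once. A real system would need three-dimensional tracking at far tighter precision.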

The facility would convert fusion energy into heat and then electricity via steam turbines, sending most of the electricity to the power grid and recycling the rest to power operations on subsequent shots. Neutrons from the reaction would produce tritium needed for the DT fuel by bombarding lithium isotopes in a “breeding blanket” material lining its target chamber. By closing both the power and fuel cycles, IFE plants are expected to be self-sustaining.
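The fuel-cycle arithmetic follows from the reaction itself: every D-T fusion burns one tritium nucleus and emits one neutron, and a neutron captured by lithium-6 (⁶Li + n → T + ⁴He) can regenerate one. The blanket therefore needs a tritium breeding ratio (TBR), tritons bred per fusion neutron, above 1 to keep the cycle closed. The sketch below, with illustrative function names, estimates the tritium burn rate from fusion power; it is simplified to ignore tritium decay, processing losses, and recovery of unburned fuel.

```python
MEV_TO_J = 1.602176634e-13
U_TO_KG = 1.66053906660e-27
Q_DT_MEV = 17.59          # energy released per D-T reaction, MeV
TRITIUM_MASS_U = 3.01605  # mass of one tritium atom, u
SECONDS_PER_DAY = 86_400


def tritium_burned_kg_per_day(fusion_power_w: float) -> float:
    """Tritium consumed per day: one triton is burned per D-T reaction."""
    reactions_per_s = fusion_power_w / (Q_DT_MEV * MEV_TO_J)
    return reactions_per_s * SECONDS_PER_DAY * TRITIUM_MASS_U * U_TO_KG


def net_tritium_kg_per_day(fusion_power_w: float, tbr: float) -> float:
    """Net tritium gain (positive) or deficit (negative) for a given TBR.

    Simplified: ignores decay, processing losses, and recovery of unburned tritium.
    """
    return (tbr - 1.0) * tritium_burned_kg_per_day(fusion_power_w)


burned = tritium_burned_kg_per_day(1e9)  # hypothetical 1 GW of fusion power
print(f"burned: {burned * 1e3:.0f} g/day, about {burned * 365:.0f} kg/yr")
for tbr in (0.95, 1.05, 1.10):
    print(f"TBR {tbr:.2f}: net {net_tritium_kg_per_day(1e9, tbr) * 1e3:+.1f} g/day")
```

At 1 GW of fusion power this gives about 0.15 kg of tritium burned per day (roughly 56 kg per year), so a TBR of 1.05 would add only about 8 grams per day of margin.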

Thanks in part to IFE STARFIRE (IFE Science and Technology Accelerated Research for Fusion Innovation and Reactor Engineering), a Department of Energy (DOE)-funded multi-institutional IFE research and development hub, researchers across the Laboratory are working to meet the new system’s demands. IPM can help identify key challenges, test the viability of new designs, and direct future research. “Many technical models and cost models exist for IFE, but very few, if any, pair systems and cost models together at the same depth as IPM,” says Mackenzie Nelson, a technoeconomic systems analyst in the Computational Engineering Division. “This type of tool offers such an advantage because we can assess design choices from both a technical and economic standpoint and create blueprints for what an IFE plant could look like.”
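The value of pairing technical and cost models can be illustrated with a toy version of that coupling. In the sketch below, a physics input (target gain) sets net electric output, which in turn sets the levelized cost of electricity (LCOE) through a standard capital recovery factor. This is not IPM: the function names, efficiencies, capital cost, operating cost, discount rate, and capacity factor are all placeholder assumptions, so only the trend, not the dollar figures, is meaningful.

```python
def capital_recovery_factor(rate: float, years: int) -> float:
    """Fraction of the capital cost repaid each year over `years` at interest `rate`."""
    growth = (1.0 + rate) ** years
    return rate * growth / (growth - 1.0)


def net_electric_mw(gain, laser_mj=2.2, rep_hz=10.0, eta_laser=0.15, eta_thermal=0.45):
    """Technical side: net electric output from a simplified energy balance (assumed inputs)."""
    laser_mw = laser_mj * rep_hz  # average laser power delivered to targets, MW
    return laser_mw * (gain * eta_thermal - 1.0 / eta_laser)


def lcoe_usd_per_mwh(net_mw, capital_usd, annual_om_usd, capacity_factor=0.85,
                     rate=0.07, years=30):
    """Economic side: levelized cost of electricity, in dollars per MWh."""
    annual_mwh = net_mw * 8760 * capacity_factor
    annual_cost = capital_usd * capital_recovery_factor(rate, years) + annual_om_usd
    return annual_cost / annual_mwh


# Placeholder costs only: $5 billion capital, $250 million per year operations.
for gain in (30, 50, 100):
    net = net_electric_mw(gain)
    print(f"gain {gain:3d}: {net:7.1f} MWe, LCOE {lcoe_usd_per_mwh(net, 5e9, 2.5e8):7.0f} $/MWh")
```

Because the same plant cost is spread over more delivered energy, raising the gain lowers the LCOE even with every cost input held fixed.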

(left to right) Livermore researchers Bassem El Dasher, Claudio Santiago, and Mackenzie Nelson discuss a 3D model of a proposed IFE power plant design alongside the Integrated Process Model (IPM). IPM has more than 270 potential user inputs that researchers and collaborators can use to assess different IFE design choices to see the technical and cost impact on the entire design.

Operational Demands

NIF’s target capsules are extremely precise and fragile and can take weeks to fabricate, fill, and position. Researchers must reconcile those constraints with the estimated demand of more than 800,000 capsules per day produced at less than $0.50 each to achieve IFE plant viability. To do this, they are examining optimal target designs for IFE and exploring advanced manufacturing methods such as microfluidics, volumetric additive manufacturing, and two-photon polymerization. (See S&TR, April/May 2025, pp. 16-19.) Additional projects involve developing diagnostic instruments that can collect and analyze data, combine it with data from other diagnostics at 10 to 20 shots per second, and use the results to improve laser performance in real time.
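The capsule demand figure follows directly from the repetition rate, since each shot consumes one target. The sketch below, with hypothetical function names, also shows why the per-target price ceiling matters: target cost per MWh scales with shots per hour divided by net electric output. The 500 MWe net output is an assumed value for illustration.

```python
SECONDS_PER_DAY = 86_400


def targets_per_day(rep_rate_hz: float) -> float:
    """Targets consumed per day of continuous operation (one target per shot)."""
    return rep_rate_hz * SECONDS_PER_DAY


def target_cost_usd_per_mwh(rep_rate_hz: float, usd_per_target: float,
                            net_electric_mw: float) -> float:
    """Target (fuel) cost per MWh of net electricity delivered."""
    usd_per_hour = rep_rate_hz * 3600 * usd_per_target
    return usd_per_hour / net_electric_mw


for hz in (10, 20):
    print(f"{hz} Hz: {targets_per_day(hz):,.0f} targets/day")
# Hypothetical 500 MWe net plant firing at 10 Hz with $0.50 targets:
print(f"{target_cost_usd_per_mwh(10, 0.50, 500):.0f} $/MWh from targets alone")
```

At 10 shots per second this gives 864,000 targets per day (1.728 million at 20 per second), and with $0.50 targets feeding a hypothetical 500 MWe plant, targets alone would cost about $36 per MWh.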

Fusion energy systems such as IFE are also a regulatory challenge, as they generate high-energy neutrons capable of breeding plutonium or uranium-233 and rely on large quantities of tritium. “Pure fusion energy systems do not require fissile material, but there are still ways to misuse these technologies that pose proliferation risk,” says Yana Feldman, the associate program leader for international safeguards. Bad actors may only need small amounts of tritium to make nuclear weapons, and some breeding blanket designs may inadvertently produce traces of plutonium that may be diverted for military purposes.

Nuclear fission reactors are regulated through international agreements and export control rules, and the independent International Atomic Energy Agency (IAEA) verifies that nuclear material and facilities are only being used for peaceful purposes. Neither treaties nor the IAEA address fusion energy, and no consensus has been reached on whether fusion energy systems need an international verification program. Verification methods for safeguarding tritium are also far less developed than those for plutonium and uranium, and they focus more on contamination and transfers than on analytical accounting for discrepancies. It is also unclear how much tritium can go unaccounted for before it poses a proliferation risk.
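Analytical accounting in safeguards generally means closing a material balance: comparing what the records say should be on hand with what is measured, and calling the difference material unaccounted for (MUF). Tritium adds a wrinkle because it decays (half-life about 12.3 years), so a balance must correct for decay or it will show a steady apparent loss. The sketch below is a simplified illustration, not an IAEA procedure; the function names, inventory values, transfer dates, measurement uncertainty, and 3-sigma alarm threshold are hypothetical.

```python
import math

TRITIUM_HALF_LIFE_DAYS = 12.32 * 365.25
DECAY_CONST_PER_DAY = math.log(2) / TRITIUM_HALF_LIFE_DAYS


def decayed(grams: float, days: float) -> float:
    """Tritium remaining after radioactive decay over `days`."""
    return grams * math.exp(-DECAY_CONST_PER_DAY * days)


def material_unaccounted_for(begin_g, transfers, end_measured_g, period_days):
    """MUF in grams, with every quantity decay-corrected to the end of the period.

    transfers: list of (day, grams); receipts or bred tritium positive,
    shipments or burned tritium negative.
    """
    expected_end = decayed(begin_g, period_days)
    for day, grams in transfers:
        expected_end += decayed(grams, period_days - day)
    return expected_end - end_measured_g


def flag_discrepancy(muf_g: float, sigma_muf_g: float, k: float = 3.0) -> bool:
    """Flag a balance whose MUF exceeds k times its combined measurement uncertainty."""
    return abs(muf_g) > k * sigma_muf_g


transfers = [(10, +50.0), (20, -30.0)]  # hypothetical receipt and shipment
muf = material_unaccounted_for(1000.0, transfers, 1015.0, 30)
print(f"MUF = {muf:.2f} g, flagged: {flag_discrepancy(muf, sigma_muf_g=0.5)}")
```

For this example (1,000 g at the start of a 30-day period, a 50 g receipt on day 10, a 30 g shipment on day 20), a measured 1,015.0 g leaves a MUF of about 0.3 g, within the assumed uncertainty. Ignoring decay would instead make 5 g appear to be missing.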

Fusion systems can be designed for proliferation resistance, but the lack of an existing plant design to evaluate remains a challenge.

International security analyst Anne-Marie Riitsaar and her colleagues are exploring these complexities and starting conversations with international fusion experts and private industry to raise awareness. Riitsaar also plans to collaborate with the IPM team to map tritium diversion vulnerabilities and identify high-risk points where researchers could incorporate surveillance methods into plant designs to detect and prevent potential misuse. “People sometimes ask me why I’m thinking about fusion energy regulations and proliferation risks at this point, but it’s not too early,” says Riitsaar. “Reaching a multinational consensus on regulating sensitive technologies takes considerable time and effort.”

The National Ignition Facility is an experimental facility and not a power plant, so a commercial IFE plant design has vastly different requirements—many of which are being studied by Livermore researchers and their collaborators.

| | NIF | Viable IFE plant (estimated) |
| --- | --- | --- |
| Repetition rate | One shot per day | 10 to 20 shots per second |
| Energy gain | 4.13 times (as of April 2025) | 30 times (minimum), 50 times to 100 times (ideal) |
| How lasers gain energy | Flashlamps | Diode pumping |
| Target fabrication and fuel filling | Fabricated offsite over several weeks and filled manually in 1 to 5 days | Mass-manufactured and filled in a target factory within the facility |
| Target delivery | Positioned manually within the Target Chamber | Shot into the plant’s target chamber approximately 10 to 20 times per second |
| Laser alignment | Computationally in real time, taking up to 8 hours | In real time |
| Power cycle | Open, requiring outside energy sources | Closed, applying reused energy to power laser and ancillary plant operations |
| Fuel cycle (tritium) | Produced offsite | Bred onsite |

The Laser Driven Fusion Integration Research and Science Test Facility (LD-FIRST) is a proposed blueprint for a proof-of-concept IFE facility that will test all the key IFE subsystems in an integrated fashion. A public-private partnership will likely be necessary to build the facility, which will help the IFE community address the main risks and technological challenges of building a commercial plant.

Converging on a Solution

The team seeks to make IPM as accurate and comprehensive as possible by meeting with subject matter experts across the Laboratory to incorporate the latest research. “We’re trying to evolve the model so it has the same level of high detail across every single functional area to tell us where we can focus research and help us find optimized solutions that we could propose to industry,” says Nelson.

Computer scientist Claudio Santiago and his colleagues also modernized IPM by porting its framework from Microsoft Excel to Python in December 2024, making it compatible with AI, ML, design optimization, and HPC to further inform designs. “Once we think about all the forcing functions such as minimum shot yield and materials requirements pinning us in from every direction, we end up with an optimized solution space. As we sharpen the pencil more with these tools, that optimized solution box gets smaller until eventually we’ve converged on a point design,” says IFE lead systems engineer Justin Galbraith. The point design developed by Galbraith and his team is called the Laser Driven Fusion Integration Research and Science Test Facility, or LD-FIRST, a proof-of-concept physics demonstration facility for IFE. “That point design, we anticipate, will serve as the foundation for a future public-private partnership that would facilitate building and realizing a physical facility to focus the IFE community in pursuit of fusion power on the grid,” says Galbraith.
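Galbraith's description of constraints narrowing a solution space can be sketched as a filter over a design grid. The example below sweeps hypothetical driver energies, target gains, and repetition rates through a simplified energy balance, applies a sequence of assumed forcing functions, and picks a point design using smallest driver energy (then lowest repetition rate) as a stand-in for cost. The grid, efficiencies, thresholds, and function names are all assumptions; IPM's actual inputs and optimization methods are far more detailed.

```python
from itertools import product

ETA_LASER, ETA_THERMAL = 0.15, 0.45  # assumed wall-plug and thermal-to-electric efficiencies


def evaluate(laser_mj, gain, rep_hz):
    """Simplified energy balance for one candidate design (powers in MW)."""
    laser_mw = laser_mj * rep_hz                # average laser power on target
    gross_mw = laser_mw * gain * ETA_THERMAL    # electricity from fusion heat
    laser_electric_mw = laser_mw / ETA_LASER    # electricity drawn by the laser
    return {"net_mw": gross_mw - laser_electric_mw,
            "recirc": laser_electric_mw / gross_mw}


# Hypothetical design grid: driver energy (MJ), target gain, repetition rate (Hz).
GRID = list(product((1.0, 2.0, 3.0, 4.0), (30, 50, 75, 100), (5, 10, 15, 20)))


def feasible(constraints):
    """Keep the grid points that satisfy every constraint predicate."""
    kept = []
    for laser_mj, gain, rep_hz in GRID:
        out = evaluate(laser_mj, gain, rep_hz)
        if all(rule(laser_mj, gain, rep_hz, out) for rule in constraints):
            kept.append((laser_mj, gain, rep_hz))
    return kept


# Hypothetical forcing functions, added one at a time.
stages = [
    ("net >= 300 MWe", lambda L, G, f, o: o["net_mw"] >= 300),
    ("recirculating <= 30%", lambda L, G, f, o: o["recirc"] <= 0.30),
    ("gain <= 75 (assumed achievable)", lambda L, G, f, o: G <= 75),
]
constraints, counts = [], []
for label, rule in stages:
    constraints.append(rule)
    designs = feasible(constraints)
    counts.append(len(designs))
    print(f"+ {label:32s} -> {len(designs)} feasible designs")

# Point design: smallest driver energy, then lowest repetition rate (a cost proxy).
best = min(designs, key=lambda d: (d[0], d[2]))
print("point design (laser MJ, gain, Hz):", best)
```

Each added constraint shrinks the feasible set, here from 43 candidate designs to 39 and then 24, before the cost proxy selects a single point design.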

Livermore is leading the charge in IFE, helping the United States develop a technological roadmap, growing and coordinating science and technology efforts within the Laboratory, and fostering partnerships across the fusion industry, academia, and government.

Ma chaired DOE’s “Basic Research Needs for IFE” workshop and report in 2022 and co-chairs the subcommittee providing recommendations on the nation’s fusion activities through DOE’s Fusion Energy Sciences Advisory Committee. She and her team travel often to Washington, D.C., working with DOE and legislators to expand fusion energy research and advocacy in the nation. Livermore also leads a “Collaboratory” with other DOE national laboratories to connect research project leads and facilitate public-private partnerships. The Collaboratory has hosted multiple events with industry, and the Laboratory has partnered with three private companies that aim to design pilot IFE plants.

Meanwhile, Galbraith and other IFE leaders have served as technical advisors for engineering design teams at Texas A&M University and given them IFE-relevant problems to solve, including advanced chamber and blanket design. Galbraith is working with Nelson to develop the IFE plant design portion of a high-energy-density science summer school program, which Nelson is leading in 2025 at the University of California at San Diego, and they have developed an IFE curriculum that has been deployed at six universities beginning in spring 2025. “We’re hoping we can get a group of students really excited about fusion and start to build up the next generation of engineers and scientists that will make fusion a reality,” says Galbraith. The team has led IFE strategic planning exercises at the Laboratory, and Lawrence Livermore will stand up a new fusion institute, the Livermore Institute for Fusion Technology (LIFT), a research and development center that will coordinate and centralize institutional fusion energy research.

Harnessing IFE will be a massive undertaking, but Livermore’s broad and deep expertise, facilities, and capabilities put the Laboratory in a unique position to lead and play an impactful role. “If we can set it up correctly, IFE will be a big piece of the Laboratory’s long-term vision,” says Ma. “IFE plays off of our history and all of our strengths, and it is critical for long-term national security.”